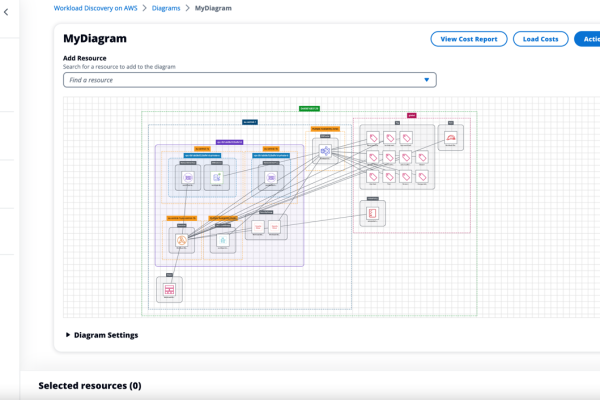

Game Day Every Day: How AWS Prepares for the Unexpected

I had the pleasure of speaking with John Gaffney from PYMNTS.com about resilience on AWS, and how we help our customers build a resilience posture that maps to their business requirements. Full interview here: https://www.pymnts.com/news/security-and-risk/2024/chaos-engineering-aws-chief-technologist-on-preparing-for-the-unexpected/